What brings down a high-density server rack faster: heat, or the cabling that traps it? In modern data centers, both can quietly erode performance long before alarms ever sound.

As compute density rises, every misplaced cable bundle can disrupt airflow, create hot spots, and turn routine maintenance into operational risk. The rack is no longer just a container for hardware; it is an engineered environment where layout decisions directly affect uptime.

This article examines how disciplined cable management and targeted cooling strategies work together to support reliability, serviceability, and energy efficiency. From airflow preservation to thermal containment, the smallest physical choices inside the rack often have the largest operational consequences.

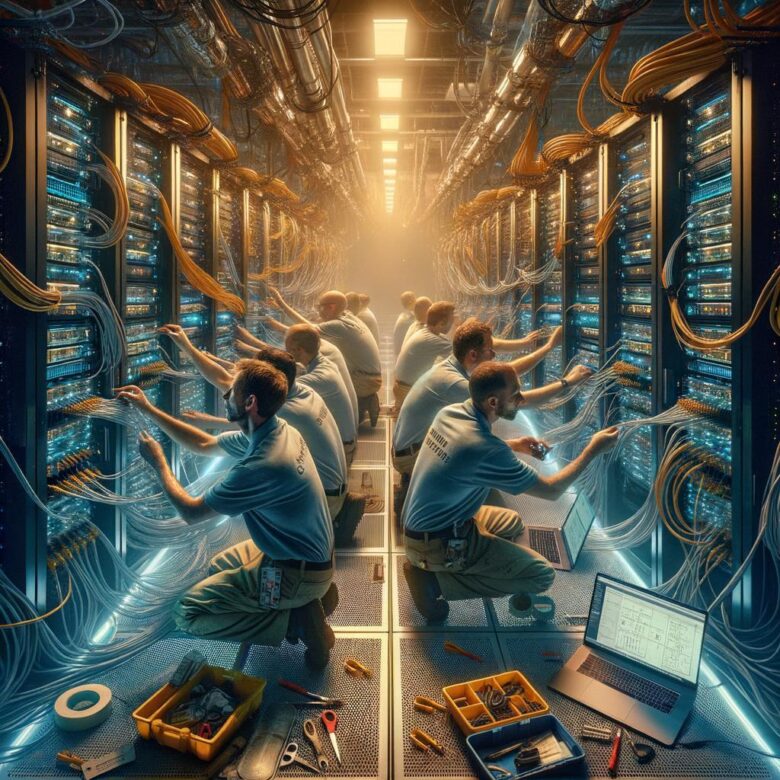

What Effective Cable Management and Airflow Control Look Like in High-Density Server Racks

What does “effective” actually look like when a rack is packed to the limit? It looks boring in the best way: clean cable paths, visible labels from the aisle, no bundles sagging across exhaust zones, and nothing blocking the perforated doors or the intake face of 1U and 2U servers.

In a well-managed high-density rack, power and data are physically separated, vertical managers are doing most of the work, and patch lengths are intentionally short rather than “close enough.” You should be able to trace a link without moving six others, and a failed PSU should be replaceable without cutting zip ties or disturbing adjacent cords.

- Front of rack stays shallow and disciplined; excess slack lives in side or vertical pathways.

- Rear cable bundles follow rack geometry, not the easiest improvised route.

- Blanking panels, brush strips, and sealed floor openings support pressure control instead of fighting it.

A quick real-world example: after a top-of-rack switch refresh, I’ve seen ports patched with longer jumpers “just for now,” creating a curtain across hot exhaust. Intake temperatures looked fine at first, but localized recirculation pushed a few nodes into higher fan states before room sensors showed anything unusual. That is how small cable mistakes become cooling problems.
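To catch that kind of localized recirculation before room sensors do, it helps to compare each node's reported inlet temperature against the cold-aisle supply rather than against a room average. Below is a minimal sketch in Python. `InletReading`, `flag_recirculation`, and the 3 °C default rise threshold are hypothetical choices, not vendor or standard values. The readings are assumed to come from whatever BMC or DCIM telemetry you already collect.

```python
from dataclasses import dataclass

@dataclass
class InletReading:
    node: str
    inlet_c: float  # inlet temperature reported by the node (assumed BMC source), deg C

def flag_recirculation(readings, supply_c, max_rise_c=3.0):
    """Return nodes whose inlet runs more than max_rise_c above cold-aisle supply.

    A node breathing noticeably warmer air than the aisle delivers is a
    common sign that exhaust is looping back to its intake.
    """
    return [r.node for r in readings if r.inlet_c - supply_c > max_rise_c]

readings = [
    InletReading("node-01", 22.5),
    InletReading("node-02", 23.0),
    InletReading("node-03", 27.4),  # sits behind the "temporary" jumper curtain
]
print(flag_recirculation(readings, supply_c=22.0))
```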

One more thing. The best racks are serviceable under pressure, not just tidy on install day. Teams using NetBox for port documentation and infrared checks with a FLIR camera usually catch trouble faster, because they can compare logical design against what the rack is physically doing.

And honestly, if a technician hesitates before pulling a server because the cabling looks risky, the rack is not under control.
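Comparing the logical design against what the rack is physically doing comes down to a set difference. The sketch below assumes you already have two lists of links. One is exported from your documentation system, for example NetBox cable records. The other is observed on the wire, for example from LLDP neighbor tables. The export format, helper names, and sample devices are hypothetical, and no NetBox API calls are shown.

```python
def normalize(link):
    """Order a link's two (device, interface) endpoints so A-B equals B-A."""
    a, b = link
    return tuple(sorted((a, b)))

def diff_links(documented, observed):
    """Return (missing, undocumented) links as sorted lists.

    missing:      documented but not seen on the wire
    undocumented: seen on the wire but absent from documentation
    """
    doc = {normalize(link) for link in documented}
    obs = {normalize(link) for link in observed}
    return sorted(doc - obs), sorted(obs - doc)

documented = [
    (("leaf-01", "Ethernet1"), ("srv-01", "eno1")),
    (("leaf-01", "Ethernet2"), ("srv-02", "eno1")),
]
observed = [
    (("srv-01", "eno1"), ("leaf-01", "Ethernet1")),  # same link, reversed order
    (("leaf-01", "Ethernet3"), ("srv-02", "eno1")),  # patched to a different port
]
missing, undocumented = diff_links(documented, observed)
print("missing:", missing)
print("undocumented:", undocumented)
```

A non-empty `undocumented` list after a change window is a good prompt to walk the rack before "just for now" patching becomes permanent.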

How to Design Rack Layouts, Cable Paths, and Cooling Zones for High-Density Deployments

Start with the power map, not the rack drawing. In high-density rows, layout decisions should follow breaker limits, PDU placement, and airflow direction; otherwise, you end up forcing cable routes around heat problems you created on paper. A practical workflow is to model rack elevations in NetBox or Sunbird DCIM, then mark top-of-rack switches, dual PDUs, and the heaviest servers before assigning any patch path.
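Checking a rack against its power map is mostly arithmetic. The sketch below assumes North American practice, where continuous load on a branch circuit is commonly held to 80 percent of the breaker rating. It also assumes dual A/B feeds, where either feed must be able to carry the whole rack if the other fails. Loads are in VA. The function names and example figures are illustrative only, so substitute your own PDU ratings and local electrical code.

```python
import math

def circuit_capacity_va(volts, amps, phases=1, derate=0.8):
    """Usable continuous capacity of one PDU circuit in VA.

    Three-phase capacity uses line-to-line voltage times sqrt(3).
    derate=0.8 reflects the common 80% continuous-load rule (an assumption;
    check the code that applies to your site).
    """
    phase_factor = math.sqrt(3) if phases == 3 else 1.0
    return volts * amps * phase_factor * derate

def check_rack(planned_load_va, feed_capacity_va):
    """With A/B feeds, one feed alone must carry the full rack load.

    Returns (fits, headroom_va).
    """
    headroom = feed_capacity_va - planned_load_va
    return headroom >= 0, headroom

single = circuit_capacity_va(208, 30)           # 4992 VA usable
three = circuit_capacity_va(208, 30, phases=3)  # about 8646 VA usable
print(check_rack(4500, single))
print(check_rack(7000, single))
print(check_rack(7000, three))
```

Running this check per feed, before any patch path is drawn, keeps a dense rack off a circuit that only works while both feeds are healthy.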

Keep the front face clean. Use one side of the rack for data and the other for power, but more importantly, reserve vertical cable managers based on actual bundle growth, not day-one port counts. A 48-port switch feeding dense virtualization hosts can double its cable fill after a storage refresh, and that is where layouts usually fail.
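Sizing vertical managers for growth rather than day-one port counts can be checked with a simple cross-sectional fill estimate. The sketch makes several assumptions. All cables are round with a single outside diameter, using 6 mm as a stand-in for Cat6, though real diameters vary by construction. The manager channel is rectangular. Growth is 2x, matching the storage-refresh scenario above, and the maximum fill target is 40 percent. All of these are planning assumptions to replace with your own numbers.

```python
import math

def manager_fill(cable_count, od_mm, width_mm, depth_mm):
    """Fraction of a manager channel's cross-section occupied by round cables."""
    cable_area = math.pi * (od_mm / 2) ** 2
    return cable_count * cable_area / (width_mm * depth_mm)

def fits_after_growth(cable_count, od_mm, width_mm, depth_mm,
                      growth=2.0, max_fill=0.40):
    """True if the projected cable count still stays under max_fill."""
    projected = math.ceil(cable_count * growth)
    return manager_fill(projected, od_mm, width_mm, depth_mm) <= max_fill

# Two 48-port switches' worth of cabling in a 75 mm x 150 mm channel.
day_one = manager_fill(96, od_mm=6.0, width_mm=75, depth_mm=150)
print(f"day-one fill: {day_one:.0%}")  # about 24%
print("fits after 2x growth:", fits_after_growth(96, 6.0, 75, 150))
```

The example passes comfortably on day one and fails once the cable count doubles, which is the layout failure described above.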

- Place heavy, high-draw nodes low to stabilize the rack; with underfloor power, this also shortens whip runs.

- Keep leaf switches and patch fields near the top to reduce crossovers and preserve front-to-back airflow.

- Design cable paths so service loops sit in side or vertical managers, out of the exhaust stream, and never behind deep servers where they block airflow.
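The placement rules in the list above can be expressed as a small elevation planner. This is a deliberately simplified sketch. It uses device weight as the sort key for the bottom group; swap in power draw if that is what drives your whip lengths. It treats anything tagged `switch` or `patch` as top-of-rack equipment. It also ignores airflow direction, PDU positions, and reserved U. `Device` and `plan_elevation` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class Device:
    name: str
    height_u: int
    weight_kg: float
    role: str  # "switch", "patch", or "server"

TOP_ROLES = {"switch", "patch"}

def plan_elevation(devices, rack_u=42):
    """Return {device name: bottom U position}.

    Servers stack upward from U1, heaviest first; switches and patch
    panels stack downward from the top U, in the order given.
    """
    servers = sorted((d for d in devices if d.role not in TOP_ROLES),
                     key=lambda d: d.weight_kg, reverse=True)
    top = [d for d in devices if d.role in TOP_ROLES]

    plan, next_low, next_high = {}, 1, rack_u
    for d in servers:
        plan[d.name] = next_low
        next_low += d.height_u
    for d in top:
        bottom = next_high - d.height_u + 1
        plan[d.name] = bottom
        next_high = bottom - 1
    # The bottom stack ends at next_low - 1; the top stack begins at next_high + 1.
    if next_low > next_high + 1:
        raise ValueError("devices do not fit in the rack")
    return plan

devices = [
    Device("web-01", 1, 15.0, "server"),
    Device("gpu-01", 4, 60.0, "server"),
    Device("leaf-01", 1, 8.0, "switch"),
    Device("db-01", 2, 30.0, "server"),
    Device("patch-01", 1, 2.0, "patch"),
]
plan = plan_elevation(devices)
print(plan)
```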

I have seen this more than once: a row passes commissioning, then overheats after a simple move/add/change (MAC) because new patching spills into the rear air path. It looks minor until inlet temperatures drift rack by rack.
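One way to catch that drift early is to snapshot inlet temperatures before a move/add/change and compare per-rack averages afterward. The sketch below assumes you can pull a handful of inlet readings per rack from your monitoring system. The 1.5 °C threshold, the rack names, and `drifted_racks` itself are illustrative, not a standard.

```python
from statistics import mean

def drifted_racks(baseline, current, max_rise_c=1.5):
    """Return (rack, rise) pairs whose mean inlet rose more than max_rise_c.

    baseline and current map rack name -> list of inlet readings in deg C,
    taken before and after the change. Worst rise first.
    """
    flagged = []
    for rack, before in baseline.items():
        after = current.get(rack)
        if not after:
            continue  # no post-change readings for this rack
        rise = mean(after) - mean(before)
        if rise > max_rise_c:
            flagged.append((rack, round(rise, 2)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)

baseline = {"R01": [21.8, 22.1, 22.4], "R02": [22.0, 22.3, 22.6], "R03": [21.9, 22.2, 22.5]}
current = {"R01": [22.0, 22.2, 22.5], "R02": [23.6, 24.1, 24.9], "R03": [23.1, 23.8, 24.4]}
result = drifted_racks(baseline, current)
print(result)
```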

Cooling zones need a boundary, not a hope. Group racks by thermal behavior (GPU, storage-dense, general compute) and avoid mixing them in the same containment segment unless CRAC supply and return capacity were sized for the worst case. If one 42U rack is packed with 1U GPU servers, leave adjacent rack space for breathing room or use chimney cabinets; otherwise, your “available” rack next door becomes unusable long before it is full.
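Whether a containment segment can absorb a mixed load is easiest to judge in airflow terms. The sketch below uses the common sensible-heat rule of thumb, CFM ≈ 3.16 × watts / ΔT (°F), which assumes roughly standard air density. It takes a 20 °F server air temperature rise as the default. Rack loads, the supply figure, and the function names are illustrative, and vendor airflow specifications for GPU servers should override the rule of thumb.

```python
def required_cfm(load_w, delta_t_f=20.0):
    """Airflow needed to remove load_w watts at a given air temperature rise (deg F).

    Rule of thumb: CFM ~= 3.16 * W / delta-T, assuming near-standard air density.
    """
    return 3.16 * load_w / delta_t_f

def segment_check(rack_loads_w, supply_cfm, delta_t_f=20.0):
    """Compare a containment segment's total airflow demand with cooling supply.

    Returns (fits, needed_cfm).
    """
    needed = sum(required_cfm(w, delta_t_f) for w in rack_loads_w)
    return needed <= supply_cfm, needed

general = [8000, 8000, 8000]  # three 8 kW general-compute racks
mixed = general + [30000]     # same segment plus one 30 kW GPU rack
print(segment_check(general, supply_cfm=8000))
print(segment_check(mixed, supply_cfm=8000))
```

The general-compute segment needs roughly 3,800 CFM, while adding one GPU rack pushes demand past 8,500 CFM. That jump is why mixing thermal classes in one segment only works when supply and return were sized for the worst case.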

Common Rack-Level Cooling and Cabling Mistakes That Reduce Performance and Raise Thermal Risk

Most rack thermal problems are created by small layout decisions, not failed CRAC units. The most common one is dressing patch cords and power whips across the front U-space, which disrupts intake velocity on 1U and 2U servers long before anyone sees a high inlet temperature alarm. I’ve seen racks pass room-level checks in DCIM and still throttle CPUs because a dense bundle sagged in front of the top third of the chassis.

Another mistake is ordering cables longer than needed “just in case” in high-density switching areas. Excess slack stuffed into vertical managers or looped above top-of-rack switches forms a warm pocket exactly where transceivers and switch ASICs already run hot. It looks tidy from the aisle, sure, but the exhaust recirculation it creates is easy to miss unless you verify with a thermal camera or sensor data in Sunbird DCIM.
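Replacing "just in case" lengths with measured ones is easy to standardize. The sketch below picks the shortest stock cord that covers a measured route plus a small service allowance. The stock catalogue, the 0.3 m allowance, and `pick_patch_length` are hypothetical. Use the lengths your team actually stocks, and measure the route through the managers rather than the straight-line distance.

```python
# Hypothetical stock catalogue of patch cord lengths, in metres.
STOCK_LENGTHS_M = (0.5, 1.0, 1.5, 2.0, 3.0, 5.0)

def pick_patch_length(measured_route_m, allowance_m=0.3, stock=STOCK_LENGTHS_M):
    """Shortest stock length that covers the measured route plus a service allowance."""
    needed = measured_route_m + allowance_m
    for length in sorted(stock):
        if length >= needed:
            return length
    raise ValueError(f"no stock length covers {needed:.2f} m; order a custom length")

print(pick_patch_length(1.1))   # -> 1.5
print(pick_patch_length(0.15))  # -> 0.5
```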

- Leaving unused rack spaces open: blanking panels are often skipped during fast installs, and hot exhaust shortcuts back to server intakes.

- Running A and B power feeds in the same side channel: one overloaded pathway becomes both a serviceability problem and a localized heat source.

- Using deep cable managers on shallow racks: they can force cables below their minimum bend radius, crowd rear exhaust, and make fan modules harder to replace under load.
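Blanking discipline is easier to enforce when open U-space is computed from the rack elevation rather than spotted by eye. This sketch assumes a simple list of installed devices as (bottom U, height) pairs, such as you might export from a DCIM tool. It returns the contiguous empty ranges that still need panels. The function name and example rack are hypothetical.

```python
def open_spaces(occupied, rack_u=42):
    """Return contiguous empty U ranges (bottom, top) that need blanking panels.

    occupied: list of (bottom_u, height_u) for each installed device.
    """
    used = set()
    for bottom, height in occupied:
        used.update(range(bottom, bottom + height))

    gaps, start = [], None
    for u in range(1, rack_u + 1):
        if u not in used and start is None:
            start = u                    # a new gap begins here
        elif u in used and start is not None:
            gaps.append((start, u - 1))  # the gap ended just below this device
            start = None
    if start is not None:
        gaps.append((start, rack_u))     # gap runs to the top of the rack
    return gaps

installed = [(1, 4), (5, 2), (10, 1), (41, 2)]
print(open_spaces(installed))  # -> [(7, 9), (11, 40)]
```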

A quick real-world one: after a storage expansion, a team added new twinax runs and neatly zip-tied them into a rigid rear bundle. Good intentions. The bundle sat directly behind high-RPM compute nodes, static pressure rose, and the rack developed a repeatable hot band at mid-height during backup windows.

The fix is rarely dramatic: shorter measured cables, hook-and-loop instead of tight ties, strict blanking discipline, and rear-door clearance checks before every add/change. Ignore those details, and the rack slowly turns into its own heat trap.

Summary of Recommendations

Effective high-density rack design comes down to discipline: treat cable management and cooling as one operational system, not two separate tasks. Clean routing preserves airflow, and stable airflow protects performance, uptime, and hardware life.

- If uptime is the priority, invest first in airflow containment, structured cabling paths, and continuous thermal monitoring.

- If growth is the priority, choose modular cable infrastructure and cooling capacity that can scale without rework.

- If cost control is the priority, target the hotspots, congestion points, and power-dense racks before expanding facility-wide.

The best decision is rarely the most complex one; it is the design standard your team can maintain consistently under real operating conditions.

Dr. Silas Vane is a telecommunications strategist and digital infrastructure researcher with a Ph.D. in Network Engineering. He specializes in the evolution of SIM technology and global connectivity solutions. With a focus on bridging the gap between hardware and seamless user experience, Dr. Vane provides expert analysis on how modern communication protocols shape our hyper-connected world.